[AINews] The Claude Code Source Leak

The accidental "open sourcing" of Claude Code brings a ton of insights.

OpenAI’s Largest Fundraise in Human History closed today, growing by a few billion and disclosing some cool numbers like $24B ARR (growing 4x faster than Google/Meta in their heyday). It also included a “soft IPO”, with $3B of investment from rich people and inclusion in ETFs from ARK Invest. However, ChatGPT WAU growth seems to have stalled out - they STILL have not crossed the 1B WAU mark targeted for end 2025. Codex also, worryingly, has not announced a new milestone for March.

By far the biggest news of the day is the Claude Code source leak, in itself not particularly damaging for Anthropic, but surely embarrassing and also somewhat educational - Christmas come early for Coding Agent nerds. You can read the many, many tweets and posts covering the 500k LOC codebase, and you can browse multiple hosted forks of the source. There are fun curiosities, such as the full verb list, or Capybara/Mythos v8, or the /buddy April Fools feature, or Boris’ confirmed WTF counter, or creating the cursed “Claude Codex”, or the dozen other unreleased features, but most serious players are commenting on a few things. Sebastian Raschka probably has a good list of the top 6:

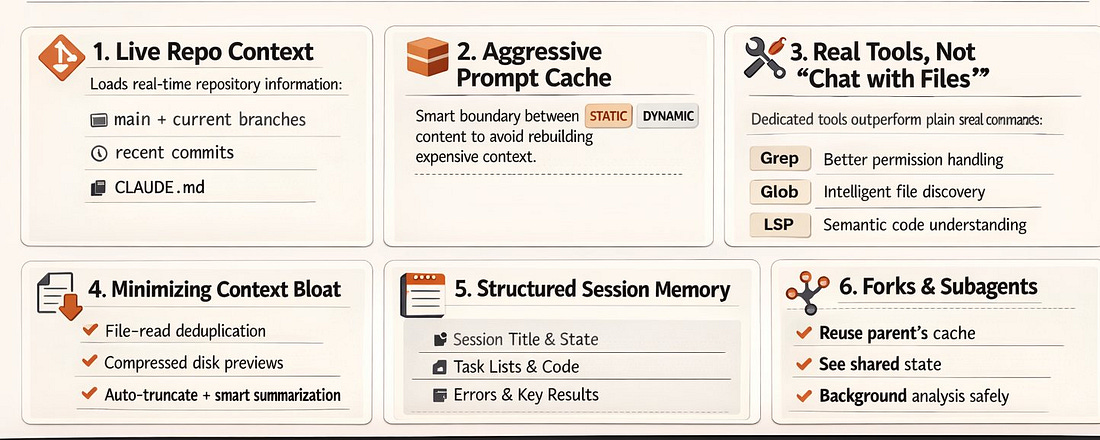

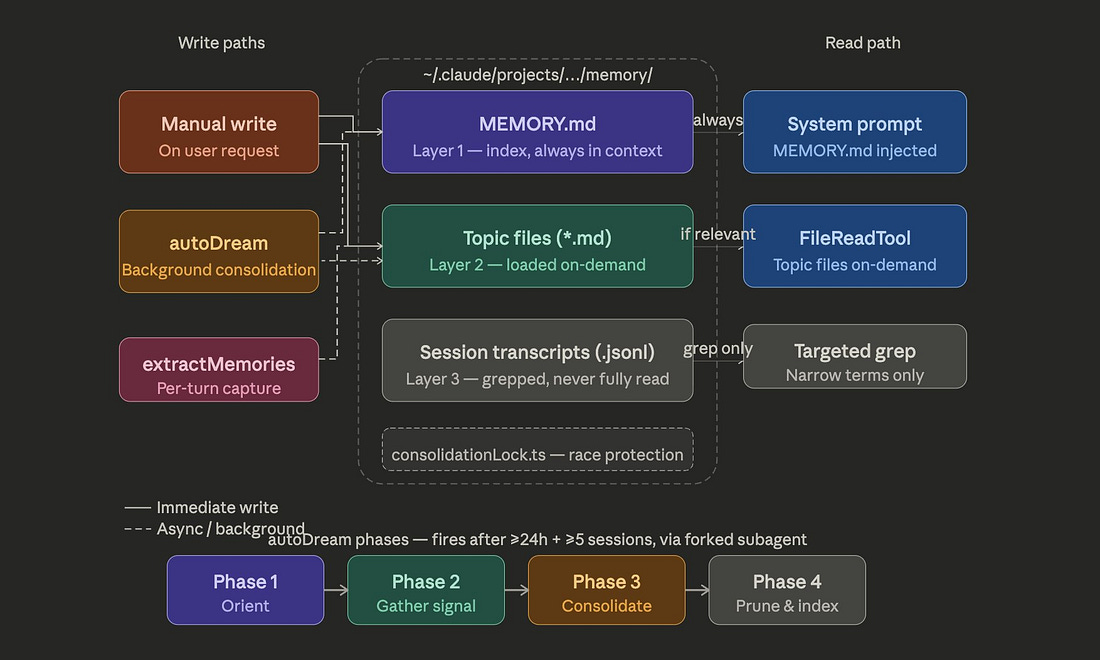

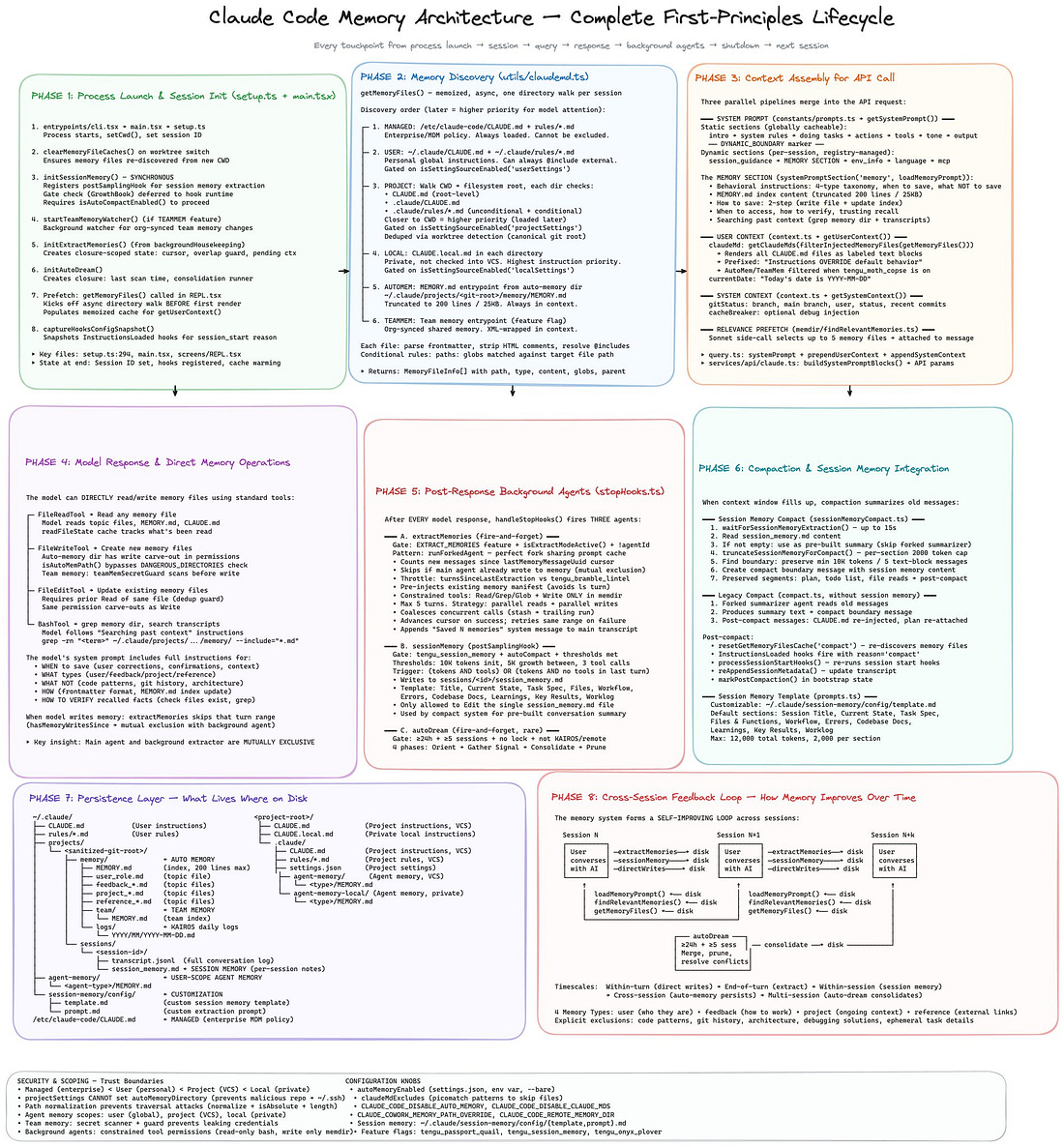

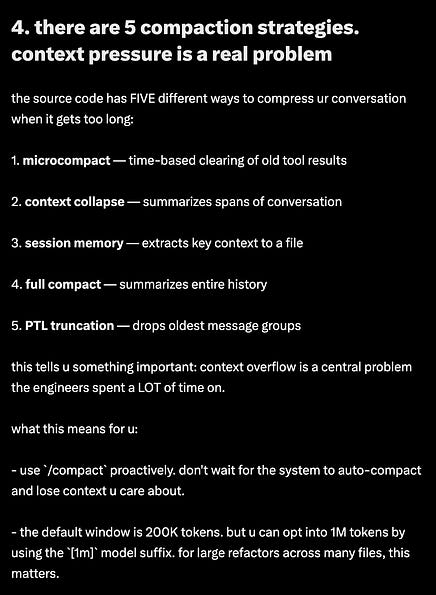

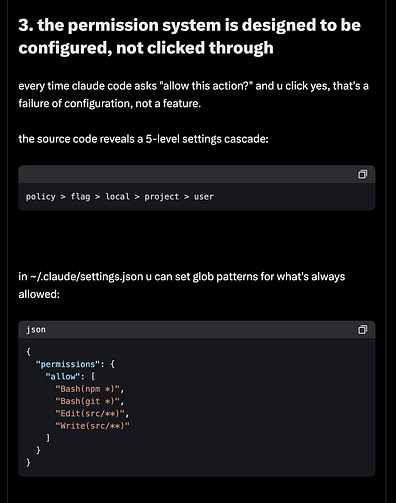

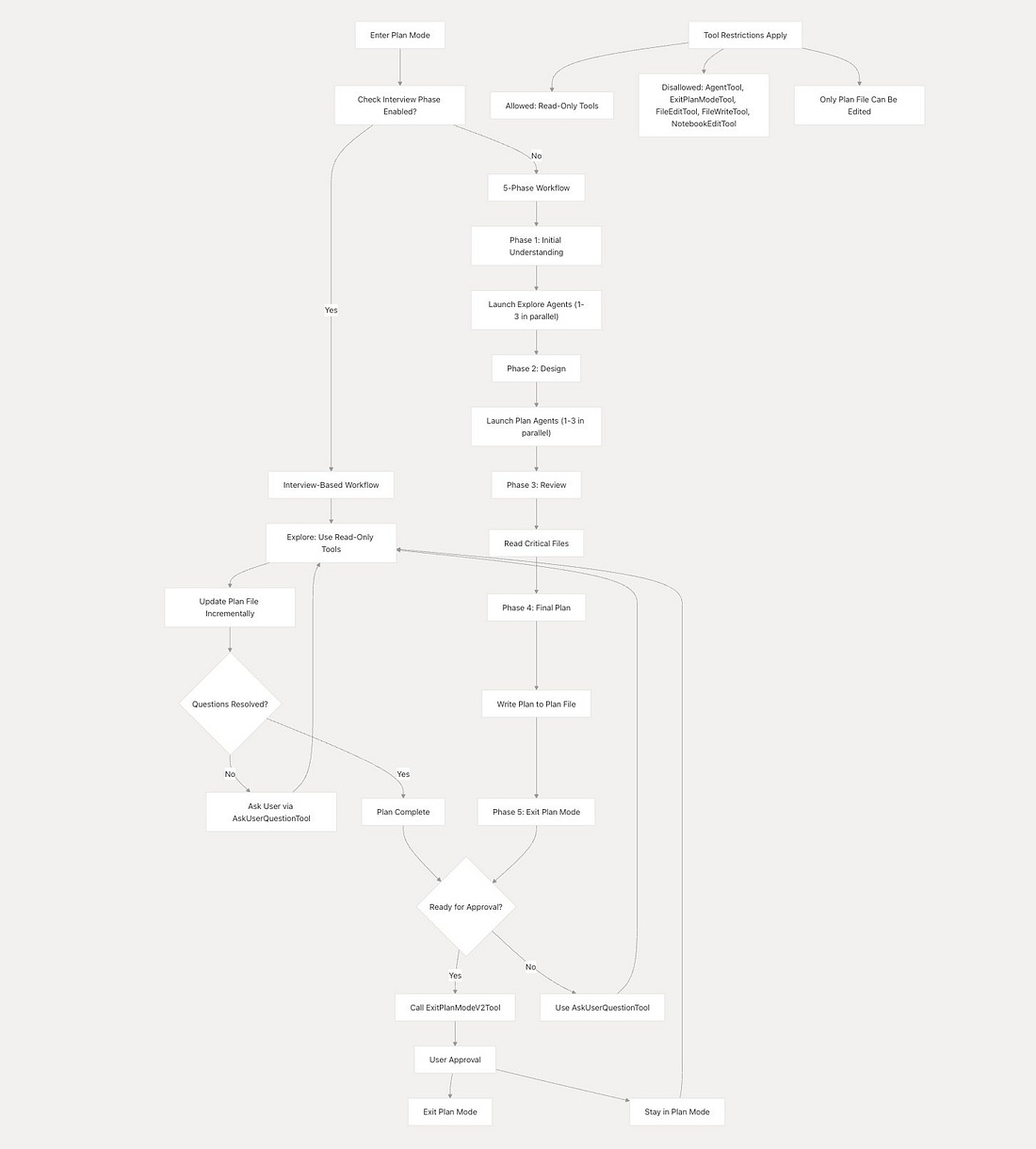

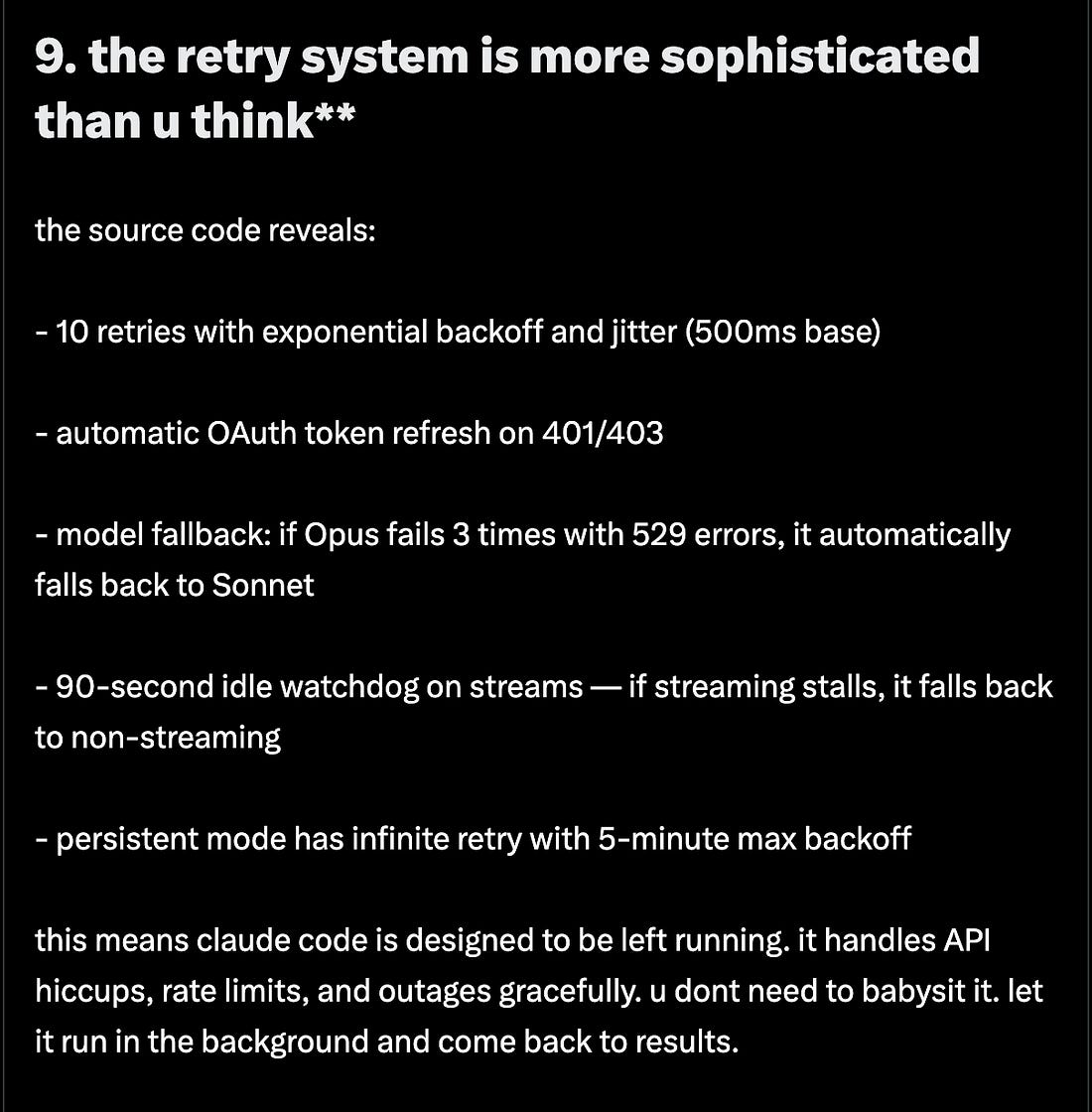

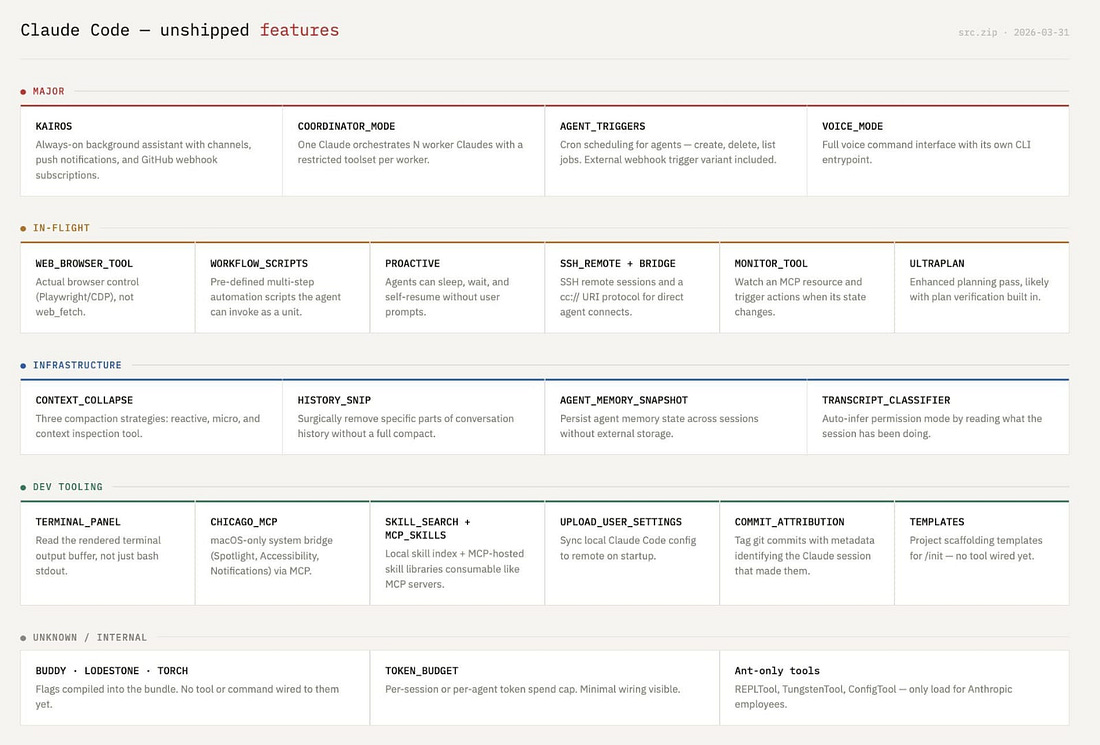

Memory

Claude Code’s Memory has a 3 layer design with 1) a MEMORY.md that is just an index to other knowledge, 2) topic files loaded on demand, and 3) full session transcripts that can be searched. There’s also an “autoDream” mode for “sleep” - merging memories, deduping, pruning, removing contradictions. A deeper analysis from mem0 finds 8 phases:

And there are 5 kinds of Compaction:

Subagents use Prompt Caching

A key feature of CC: they use the KV cache to create a fork-join model for their subagents, meaning they contain the full context and don’t have to repeat work. In other words: Parallelism is basically free.

The 5 level Permission System

The 2 Types of Plan mode

Resilience/Retry

Other Unreleased/Internal Features

Including an employee-only gate and an employee TUI, but also a bunch of other stuff in development including ULTRAPLAN and KAIROS:

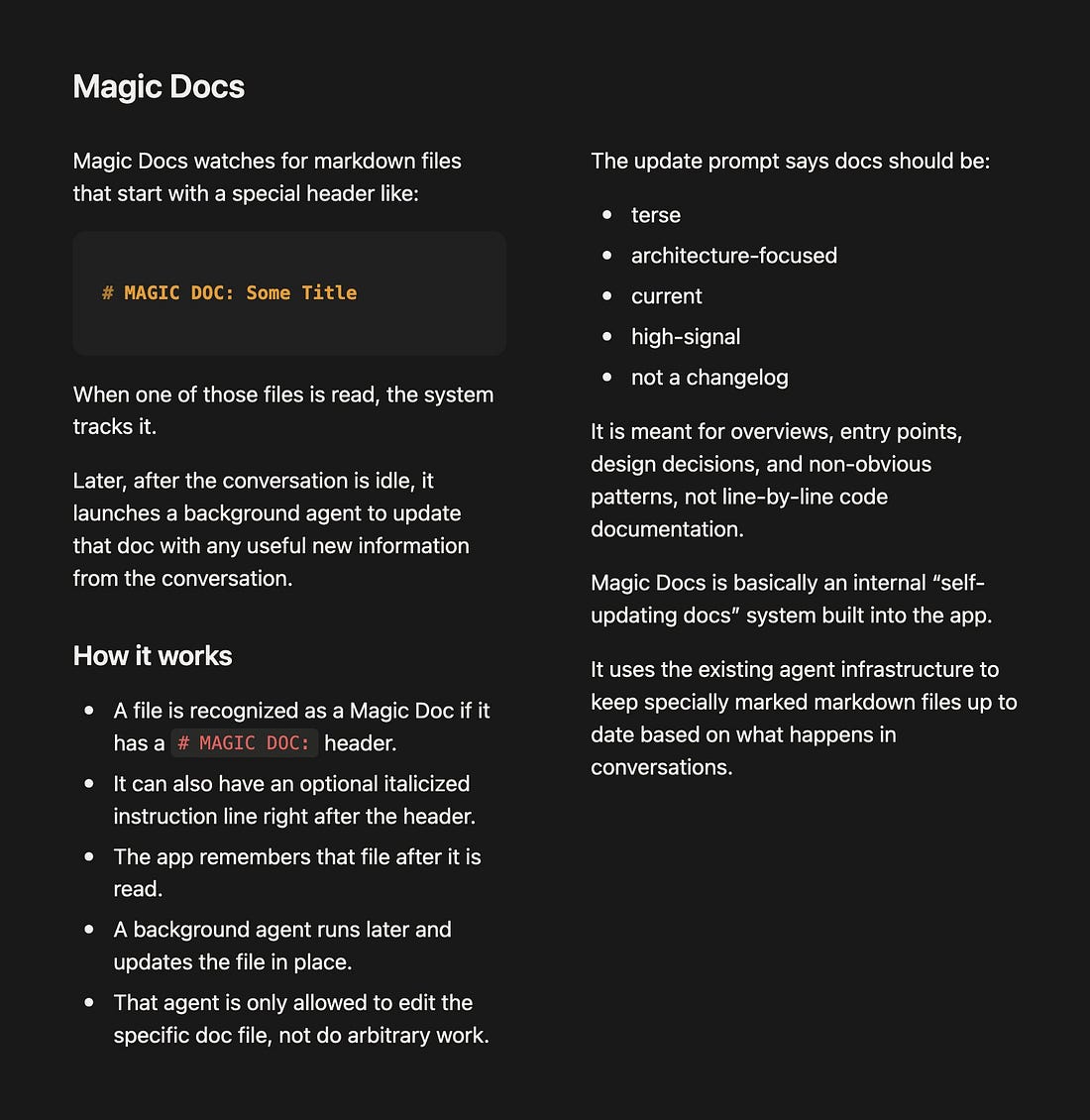

And internal MAGIC DOCS:
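Stepping back to the 3 layer Memory design described earlier, here is a rough Python sketch of how an index, on-demand topic files, and searchable transcripts could fit together. This is not the leaked code: the class, method, and field names are all hypothetical, and `consolidate` is only a crude stand-in for “autoDream” that dedupes and prunes (it makes no attempt at merging or contradiction removal).

```python
from dataclasses import dataclass, field


@dataclass
class LayeredMemory:
    """Toy model of a three-layer memory. All names are hypothetical."""

    # Layer 1: always-in-context index (the role MEMORY.md plays): topic -> summary
    index: dict[str, str] = field(default_factory=dict)
    # Layer 2: topic files, only read when a topic is actually needed
    topics: dict[str, str] = field(default_factory=dict)
    # Layer 3: raw session transcripts, searched rather than loaded
    transcripts: list[str] = field(default_factory=list)

    def remember(self, topic: str, summary: str, notes: str) -> None:
        self.index[topic] = summary
        self.topics[topic] = notes

    def base_context(self) -> str:
        """Render only the index; this is the cheap part kept in every prompt."""
        return "\n".join(f"- {t}: {s}" for t, s in sorted(self.index.items()))

    def load_topic(self, topic: str) -> str:
        """Pull one topic file into context on demand."""
        return self.topics[topic]

    def search_transcripts(self, query: str) -> list[str]:
        """Case-insensitive substring search over transcript lines."""
        q = query.lower()
        return [
            line
            for transcript in self.transcripts
            for line in transcript.splitlines()
            if q in line.lower()
        ]

    def consolidate(self) -> None:
        """Assumed 'sleep' pass: dedupe lines per topic, prune empty topics."""
        for topic, notes in list(self.topics.items()):
            kept: list[str] = []
            for line in notes.splitlines():
                line = line.strip()
                if line and line not in kept:
                    kept.append(line)
            if kept:
                self.topics[topic] = "\n".join(kept)
            else:
                del self.topics[topic]
                self.index.pop(topic, None)
```

The point of the split is that only `base_context()` is paid for on every turn; topic notes and transcripts cost nothing until something asks for them.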

AI Twitter Recap

Top Story: Claude Code source leak - architecture discoveries, Anthropic’s response, and competitor reactions

What happened

Claude Code had substantial source artifacts exposed via shipped source maps / package contents, which triggered rapid public reverse-engineering, mirroring, and derivative ports. The discussion quickly shifted from “embarrassing leak” to “what does this reveal about state-of-the-art agent harness design?” Multiple observers highlighted that the leak exposed orchestration logic rather than model weights, including autonomous modes, memory systems, planning/review flows, and model-specific control logic. Public forks proliferated; one post claimed 32.6k stars and 44.3k forks on a fork before legal fear led to a Python conversion effort using Codex (Yuchenj_UW). Later commentary put the exposed code volume at 500k+ lines (Yuchenj_UW). Anthropic then moved to contain redistribution via DMCA takedowns according to several posters (dbreunig, BlancheMinerva). Separately, a Claude Code team member announced a product feature during the fallout - easier local/web GitHub credential setup via

Facts vs. opinions

What is reasonably factual from the tweets:...



Tuesday, 31 March 2026
