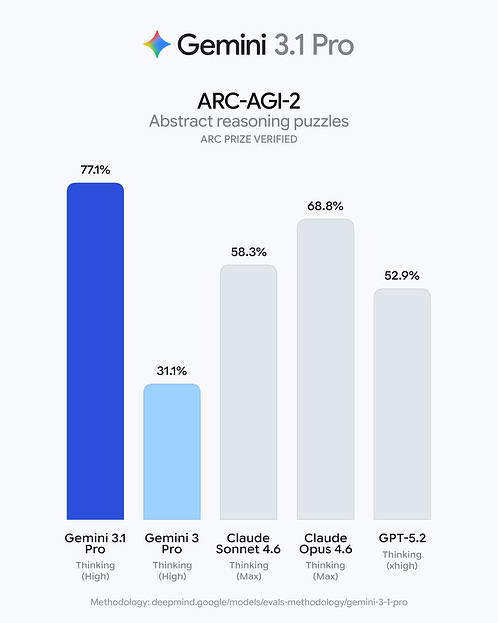

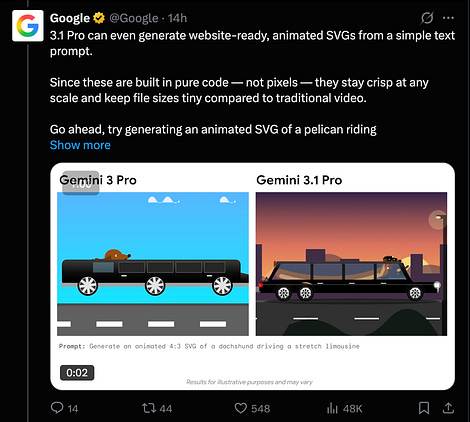

It’s getting a little hard to say interesting things with all the round-robin minor version updates of frontier models every week, but Gemini 3.1 Pro seems like a decent enough advance to catch up with, and in some cases supersede, its fellow frontier models (this is surely the reason that 3.1 -had- to be released, because with 5.3 and 4.6 things were seriously falling behind for Google¹).

It’s better at some SVG design things, and at translating textual vibes to visual aesthetics.

AI Twitter Recap

Top Story: Gemini 3.1 release facts and reactions/opinions

Google shipped Gemini 3.1 Pro (generally described as a Preview for developers) and rolled it out across the Gemini app, NotebookLM, Gemini API / AI Studio, and Vertex AI, positioning it as the “core intelligence” from Gemini 3 Deep Think scaled down for practical product use. The announcement emphasized a big reasoning jump (especially ARC-AGI-2 = 77.1%), plus strong coding and agentic-tool benchmarks (e.g., SWE-Bench Verified = 80.6%) and improved hallucination behavior.

Independent leaderboards and evaluators largely corroborated top-tier performance and strong cost/intelligence positioning, while reaction threads highlighted:

(a) excitement about practical gains (SVG/web/UI/code quality, agentic use cases);
(b) skepticism about benchmark-targeting and “eval tweeting”;
(c) concerns that GDPval (real-world agentic tasks) is not leading despite other SOTA scores; and
(d) rollout friction, with users finding some products (Gemini CLI / Code Assist / Antigravity) unavailable or inconsistently updated at launch.

Facts vs. opinions

[AINews] Gemini 3.1 Pro: 2x 3.0 on ARC-AGI 2

Thursday, 19 February 2026
